The first of a series of three posts inspired by Daniel Kahneman’s ‘Thinking, Fast and Slow’

Milgram’s (in)famous experiment is always great fun to teach. People quickly see the relevance of the study to their everyday lives and to some of the lessons of history and, let’s face it, they love how deeply unethical the whole thing is. However, another reason that students find the study so interesting is that the results are (for them) always extremely surprising. Whenever a new cohort of students learns the basic procedure for the experiment I always ask them the same question:

“If you had been a participant in Milgram’s experiment, would you have gone all the way to 450v?”

The answers are pretty consistent; it is rare that more than 10% of a given class think that they would have carried on to the bitter end.

So far this is hardly a surprising result; after all, the survey of Psychology students and professionals that Milgram took before his original study predicted that only one in 1000 people would go that far! If anything, my classes seem to be a bit more realistic, although they still greatly underestimate the true obedience figure of around 65%, which Milgram found and which has been closely replicated in many studies all over the world since.

What is interesting is what comes after we’ve finished covering the study. This is when they get the same question again...

“Given what you know about the Milgram experiment, if you had been a participant, would you have gone all the way to 450v?”

The students know by this stage, of course, that in any given sample, roughly two thirds of people will obey up to 450v. They know that this result has been widely replicated. Presumably then, we would expect them to use that information to come up with a more informed judgement about whether or not they would have obeyed. Indeed the results do tend to show this... a bit! Usually in this second poll around 20-30% of students say that they might have shown full obedience, but that still leaves 70-80% of people who think they would be in the 35% of defiant participants! I’m no mathematician, but something clearly doesn’t seem to add up.
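For anyone who does want to see the sums, here is a rough sketch in Python. The class size of 20 is a made-up number purely for illustration, and the function name is my own; the 65% and 20-30% figures are the ones quoted above.

```python
def obedience_gap(class_size, obey_pct, self_predicted_pct):
    """Compare the number of students the base rate says would obey
    with the number who say they would obey."""
    # Students we would expect to go to 450v if the class behaves
    # like Milgram's participants.
    expected = round(class_size * obey_pct / 100)
    # Students who say, in the poll, that they might go to 450v.
    predicted = round(class_size * self_predicted_pct / 100)
    return {
        "expected_obedient": expected,
        "self_predicted_obedient": predicted,
        "gap": expected - predicted,
    }


CLASS_SIZE = 20  # hypothetical class size, not a figure from my surveys
OBEY_PCT = 65    # obedience rate quoted above

# The second poll: 20-30% say they might have obeyed.
for poll_pct in (20, 30):
    print(poll_pct, obedience_gap(CLASS_SIZE, OBEY_PCT, poll_pct))
```

In a class of 20, the base rate suggests about 13 students would go all the way to 450v, yet only 4 to 6 say they would. That leaves somewhere between 7 and 9 students whose prediction about themselves is at odds with what the results tell us about people in general.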

We are faced with something of a psychological problem. How do we explain this difference between what the students know are the results for everyone else and what they assume will be the results for them? Fortunately there are some clear psychological principles that can help us to explain this pattern. Rather more unfortunately, they do seem to have the worrying implication that teaching Psychology at all might be a waste of time!

Explanation 1 - The Fundamental Attribution Error (Ross, 1977)

The fundamental attribution error is a complicated name for a very simple thing. It is the tendency that we have to explain other people’s behaviour by reference to their character (he’s a jerk, she’s arrogant etc), whilst explaining our own behaviour with reference to the situation we’re in (the room was too cold, it was dark and I couldn’t see etc). In psychological terms, we overestimate the effect of dispositions and underestimate the effect of situations in explaining other people’s behaviour (and the opposite for ourselves). For example, if we see the person next to us receive a bad mark in a test, we’re likely to jump to dispositional conclusions (“she’s stupid”). However, if a minute later we get our test back and we have scored the same mark, we are far more likely to find situational explanations for the result (“There was too much noise in the room and I didn’t sleep properly the night before”).

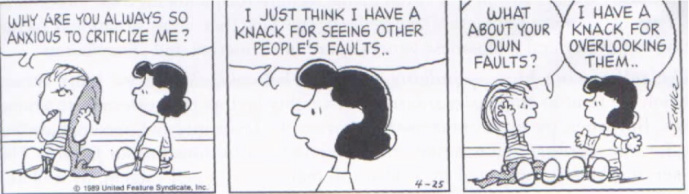

The fundamental attribution error - as illustrated by Charles Schulz's 'Peanuts' cartoons

Explanation 2 - Neglect of base rates

In 1975 Psychologists Richard Nisbett and Eugene Borgida conducted an experiment in which participants were told the results of a famous ‘helping experiment’ (Darley and Latane, 1968). The helping experiment found that only 4 out of 15 people (27%) went to help another participant (actually a confederate) who they thought was having a seizure. Nisbett and Borgida’s test was very simple; they told participants the results of this study and then asked them if they would have helped. The assumption was that, having been given the normal frequency of helping behaviour (the base rate), the participants would use this in their own decisions, probably coming up with a lower estimate than they might have done otherwise. Nisbett and Borgida found that... they didn’t. In fact the predictions of the experimental group were no different to those of a control group who hadn’t been given the base rates at all; well over half of participants said they’d help. The extra information made no difference! People simply didn’t use it when coming to their decision.

These two phenomena are very closely related; indeed, one may cause the other (base rate neglect may cause the fundamental attribution error, or vice versa), but they are both very useful tools for looking again at the results in my Milgram surveys. In my classes on Milgram, just as for Nisbett and Borgida, the base rate of ‘what most people do’ seems to be completely ignored when people have to decide ‘what would I do?’ Perhaps this is the result of the fundamental attribution error, where we still can’t help but think of the obedient subjects as ‘cruel’ or ‘submissive’ or even just ‘weird’ (even though we know deep down that they were just normal people like us). Either way, the end result seems to be that every time we are confronted with the question of what we would do as individuals, we ignore any evidence from what other people normally do and assume that we are different.

As a psychologist, this is an interesting finding, but it is also a slightly depressing one. Why? Because it seems to imply that learning about psychological facts has absolutely no impact at all upon our actions or decision making! This is a deeply worrying thought. As teachers, although we spend more of our lives than we would like banging on about exams, in truth most of us are actually hoping to give you something more than good grades and a lifelong habit of underlining the date and title. We hope, perhaps naively, that you might leave our classes with ‘life skills’, ideas for different ways of thinking and acting that could benefit you long after you leave school. As Psychology teachers, this is especially true, given that so many of the topics we cover are of direct relevance to people’s lives.

What the consistent surprise at the Milgram experiment’s results, as well as studies like Nisbett and Borgida’s, seems to show, however, is that we are wasting our time. You can write as many essays and learn as many details about the Milgram experiment as you want, but when it comes down to it in real life, when you actually test whether someone’s way of thinking or behaving has been altered by the information we’ve passed on, nothing’s changed!

So there you have it: the reasons why Milgram’s results are still so surprising today. As a psychologist, fascinating; as a teacher, terrifying! Do you agree? Has a lesson you’ve had, a teacher you’ve liked or a topic you’ve studied ever changed the way you think? Comment below and stop me from getting depressed!
